09 - Types of Sound Software

There is a huge amount of audio software available, both free and commercial. The software relevant to signal processing can be divided very roughly into three categories, with considerable overlap between them:

- Wave editors

- Digital Audio Workstations (DAW)

- Algorithmic programming languages

These three types provide different tools for different purposes.

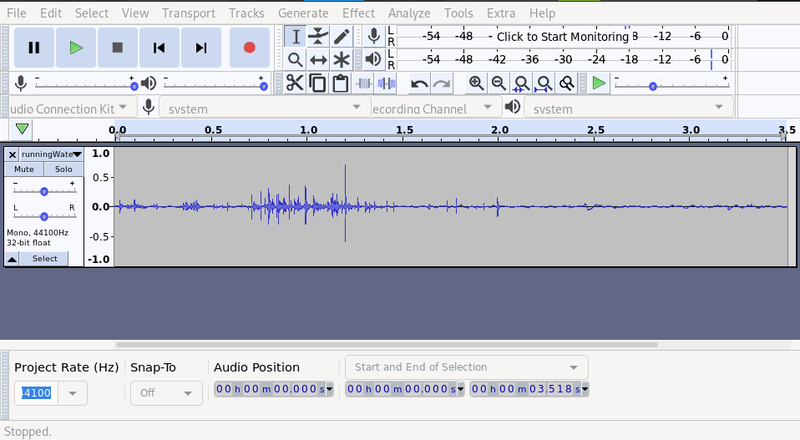

Wave Editors

The job of a wave editor is to work directly on sound files. Examples include changing the amplitude of a recording or splitting a large file into smaller chunks that can be loaded into a sampler. Most wave editors can also do frequency analysis and noise reduction, as well as various kinds of frequency processing. Other typical uses are putting the final touches on a mix or converting a file from one format to another.

Examples: Audacity (free), Sound Forge (paid)
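The two basic wave-editor operations mentioned above, changing the amplitude and splitting a file into chunks, come down to simple operations on the list of samples in the file. The following is a minimal sketch in Python using only the standard `wave` and `array` modules. It assumes a mono, 16-bit PCM WAV file, and the function names are made up for this illustration. A real wave editor supports many more formats and operations.

```python
import array
import io
import math
import sys
import wave


def read_mono_wav(f):
    """Return (sample_rate, samples) for a mono, 16-bit PCM WAV file."""
    with wave.open(f, "rb") as w:
        if w.getnchannels() != 1 or w.getsampwidth() != 2:
            raise ValueError("this sketch only handles mono 16-bit PCM")
        samples = array.array("h", w.readframes(w.getnframes()))
        rate = w.getframerate()
    if sys.byteorder == "big":  # WAV sample data is little-endian
        samples.byteswap()
    return rate, samples


def write_mono_wav(f, rate, samples):
    """Write int16 samples to f as a mono, 16-bit PCM WAV file."""
    data = array.array("h", samples)  # copy, so the caller's data is untouched
    if sys.byteorder == "big":
        data.byteswap()
    with wave.open(f, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(rate)
        w.writeframes(data.tobytes())


def scale_amplitude(samples, gain):
    """Multiply every sample by gain, clipping to the int16 range."""
    return array.array(
        "h", (max(-32768, min(32767, round(s * gain))) for s in samples)
    )


def split_into_chunks(samples, chunk_len):
    """Cut samples into consecutive pieces of at most chunk_len samples."""
    return [samples[i:i + chunk_len] for i in range(0, len(samples), chunk_len)]


if __name__ == "__main__":
    rate = 8000
    # One second of a 440 Hz sine tone at roughly half of full scale.
    tone = [round(16383 * math.sin(2 * math.pi * 440 * n / rate)) for n in range(rate)]
    src = io.BytesIO()  # an in-memory "file" stands in for a file on disk
    write_mono_wav(src, rate, tone)
    src.seek(0)

    rate, samples = read_mono_wav(src)
    quieter = scale_amplitude(samples, 0.5)         # halve the amplitude (about -6 dB)
    pieces = split_into_chunks(quieter, rate // 4)  # 250 ms chunks
    print(len(pieces), max(abs(s) for s in quieter))
```

Clipping in `scale_amplitude` matters when the gain is greater than 1. Without it, loud samples would overflow the 16-bit range instead of distorting in the usual "clipped" way.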

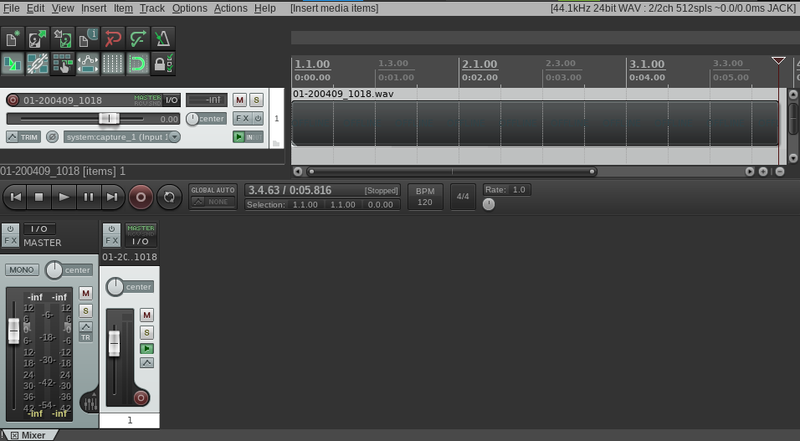

Digital Audio Workstations (DAWs)

Where a wave editor works on individual sound files, a DAW is a complete studio for recording sound, composing a piece of music, applying effects and exporting it as a finished product.

Examples: Ableton Live (paid), Reaper (paid), Ardour (free)

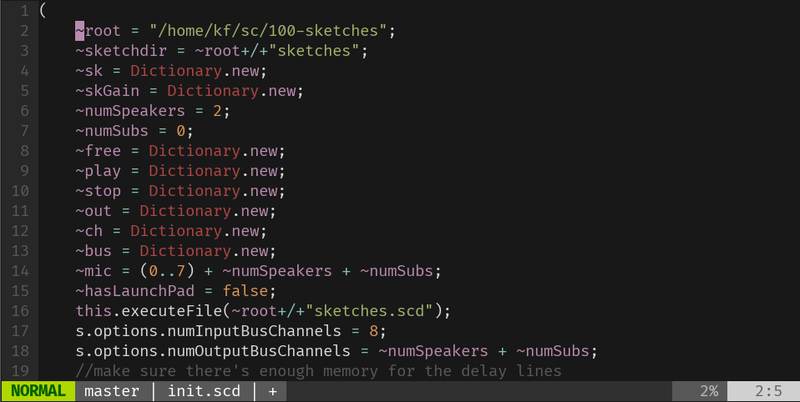

Algorithmic Programming Languages

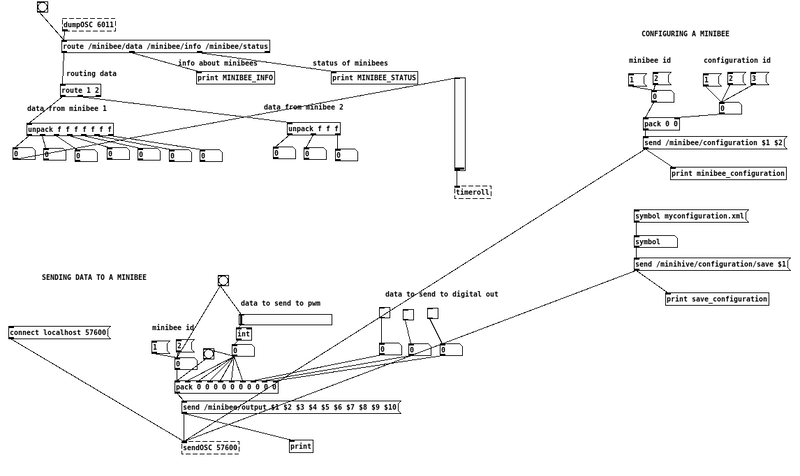

A DAW generally exports the result as a fixed audio file, such as a WAVE file, that sounds exactly the same every time it is played. But what if you want your music to be different every time it plays back? Or what if you want to trigger sounds with movement sensors? For this you need to dig beneath the surface and start programming yourself. Algorithmic programming languages fall into two main branches: text-based and patching-based. In a text-based language, you write your program as plain-text code that sends messages to a sound server of some kind. A patching language consists of many small units that you connect (patch) together to build your program visually.
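To make the idea of "music that is different every time it plays back" concrete, here is a minimal sketch in Python rather than in one of the languages listed below. It picks a random melody from a scale and renders it as sine tones into a WAV file. Every detail is an arbitrary illustrative choice: the scale, note length, sample rate, function names, and the output filename `melody.wav`. Dedicated languages such as ChucK or SuperCollider typically generate sound in real time instead of writing a file.

```python
import array
import math
import random
import sys
import wave

RATE = 22050  # sample rate in Hz (arbitrary choice for this sketch)
# A minor pentatonic scale as MIDI note numbers: A3 C4 D4 E4 G4 A4 (arbitrary choice).
SCALE = [57, 60, 62, 64, 67, 69]


def midi_to_hz(note):
    """Convert a MIDI note number to a frequency in Hz (note 69 = A4 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def random_melody(length, rng):
    """Pick `length` notes at random from SCALE."""
    return [rng.choice(SCALE) for _ in range(length)]


def render(notes, note_dur=0.25, amp=0.3):
    """Render notes as sine tones with short linear fades to avoid clicks."""
    n = int(RATE * note_dur)   # samples per note
    fade = int(RATE * 0.01)    # 10 ms fade-in and fade-out
    out = array.array("h")
    for note in notes:
        f = midi_to_hz(note)
        for i in range(n):
            env = min(1.0, i / fade, (n - 1 - i) / fade)
            out.append(round(32767 * amp * env * math.sin(2 * math.pi * f * i / RATE)))
    return out


def write_wav(path, samples):
    """Write int16 samples to a mono, 16-bit PCM WAV file."""
    data = array.array("h", samples)
    if sys.byteorder == "big":  # WAV sample data is little-endian
        data.byteswap()
    with wave.open(path, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(RATE)
        w.writeframes(data.tobytes())


if __name__ == "__main__":
    rng = random.Random()  # unseeded, so each run produces a new melody
    notes = random_melody(16, rng)
    write_wav("melody.wav", render(notes))
    print(notes)
```

Passing a seeded generator such as `random.Random(1)` instead makes a run reproducible, which is useful when you want to recreate a version you liked.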

Text-based examples: ChucK, SuperCollider (both free)