23 - Compression


OK, so we have shaped the frequency content of our sound into something cool/beautiful/awesome/grungy. Now what? One thing you will notice when working with recordings rather than synthesized sound (sine waves, white noise, etc.) is how wildly inconsistent the amplitude is. One moment the singer is whispering into the microphone, the next she is screaming her lungs out. In the good old days this would be solved by a very nervous sound engineer who kept a finger on the mixing console fader, riding it up and down to make sure the audience 1) could hear the star whispering and 2) didn't have their hearing destroyed when she hit that high C. Thanks to technology this job can now be handed over to a process called compression.

A compressor is a machine version of our nervous sound engineer, pushing the amplitude up and down in response to the incoming sound to keep the output as steady as possible.

If you were to design such a machine you would need to figure out a few things. The most important ones are:

- How loud should it get before the amplitude is pulled down?

- By how much does it pull down?

- How fast does it react?

- And how fast does it return to the baseline amplitude?

- Finally, how much does it boost the amplitude when the level is low?

In a normal compressor these parameters are called:

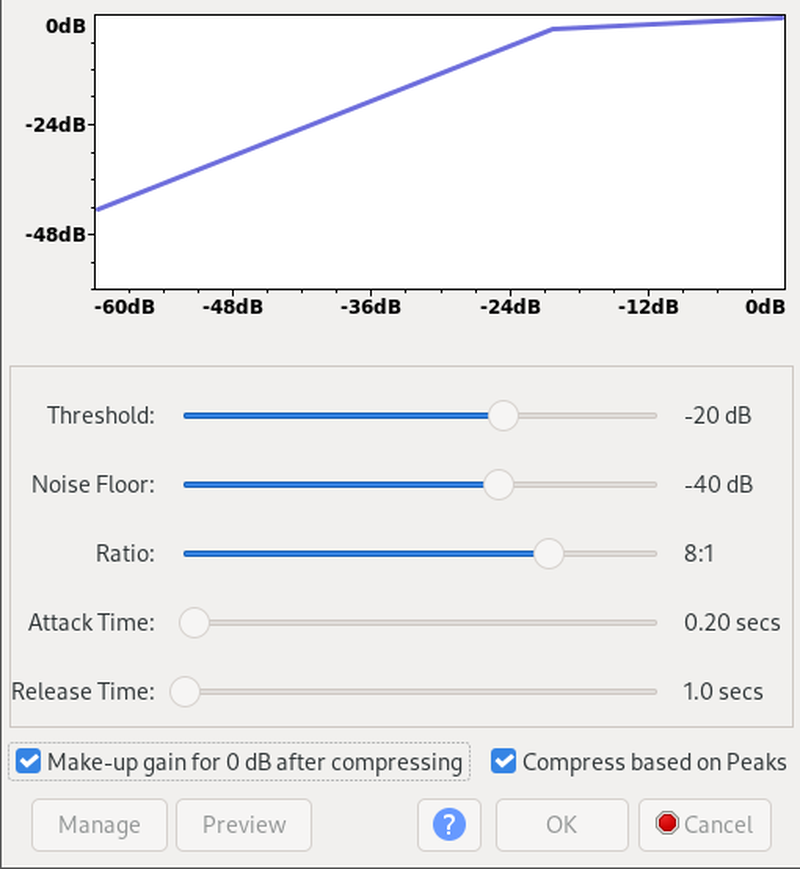

- Threshold, which is usually specified in dBFS (decibels relative to full scale), for example -4 dBFS.

- Ratio, which is specified as a, well, ratio. 3:1 means that if the signal is 1 dB over the threshold, it will be pulled down to 1/3 of a dB over the threshold.

- Attack time. How many milliseconds does it take from the signal exceeding the threshold until the compressor reacts?

- Release time. How many milliseconds does it take to return to the baseline amplitude once the signal has dropped back below the threshold?

- Make-up gain, mostly specified in dB. In Audacity the traditional make-up gain is replaced with the check-box "Make-up gain for 0dB after compressing". This means that the compressor will analyse the audio after compression and make sure the loudest parts of it are at 0 dBFS.
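To make the five parameters concrete, here is a minimal sketch of a compressor in plain Python. It is not how Audacity implements its effect; the function names (`static_gain_db`, `smoothing_coeff`, `compress`), the default values, and the simple per-sample level detection are all assumptions chosen for readability. The threshold and ratio decide how much gain reduction is wanted, and the attack and release times decide how quickly the actual gain moves towards that target.

```python
import math

def static_gain_db(level_db, threshold_db, ratio):
    """Gain change in dB (always <= 0) wanted for a given input level."""
    over_db = level_db - threshold_db
    if over_db <= 0.0:
        return 0.0                      # below the threshold: leave it alone
    return over_db / ratio - over_db    # e.g. 3 dB over at 3:1 -> -2 dB

def smoothing_coeff(time_ms, sample_rate):
    """One-pole smoothing coefficient for an attack or release time (assumed model)."""
    return math.exp(-1.0 / (time_ms * 0.001 * sample_rate))

def compress(samples, sample_rate, threshold_db=-4.0, ratio=3.0,
             attack_ms=10.0, release_ms=100.0, makeup_db=0.0):
    """Compress a list of float samples in the range -1.0..1.0."""
    attack = smoothing_coeff(attack_ms, sample_rate)
    release = smoothing_coeff(release_ms, sample_rate)
    gain_db = 0.0                       # current gain, starts at "no change"
    out = []
    for x in samples:
        # Level of this sample in dBFS (floored to avoid log of zero).
        level_db = 20.0 * math.log10(max(abs(x), 1e-9))
        target_db = static_gain_db(level_db, threshold_db, ratio)
        # Pulling the gain down uses the attack time, letting go uses the release.
        coeff = attack if target_db < gain_db else release
        gain_db = coeff * gain_db + (1.0 - coeff) * target_db
        # Make-up gain is added on top of the gain reduction.
        out.append(x * 10.0 ** ((gain_db + makeup_db) / 20.0))
    return out

if __name__ == "__main__":
    loud = compress([1.0] * 2000, sample_rate=1000)
    print("first sample:", loud[0], "settled sample:", loud[-1])
```

Because of the attack time, the first loud samples pass through almost untouched and the gain only settles at its full reduction a little later. A real compressor would typically measure the level with a smoothed envelope rather than sample by sample, which this sketch skips.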

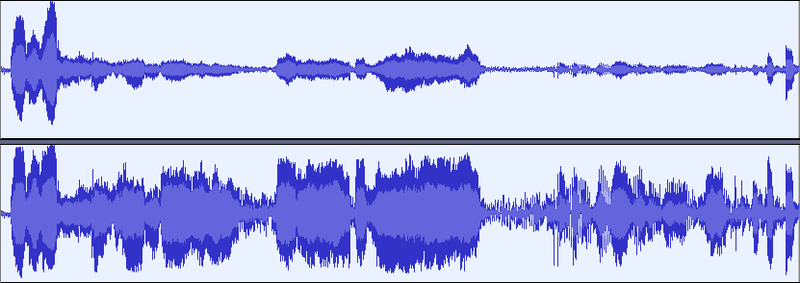

In the next figure you will find an uncompressed and a compressed recording. Guess which is which?

Interestingly, the perceptual result of lowering the amplitude of the loudest parts is that the entire recording sounds louder. This is because the average amplitude goes up (if we use make-up gain, that is...), and is the root cause of the loudness war that's been going on since advanced compression algorithms became available in the 1990s.
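The claim about the average amplitude can be checked with a small experiment. The sketch below builds a toy "recording" (a quiet half followed by a loud half), squashes the loud part, and then scales the result so its peak sits at 0 dBFS again, roughly in the spirit of the Audacity check-box. The helper names, the -12 dBFS threshold, and the instant-acting compressor (no attack or release) are assumptions made to keep the example short.

```python
import math

def rms_db(samples):
    """Average (RMS) level of the samples in dBFS."""
    mean_square = sum(x * x for x in samples) / len(samples)
    return 10.0 * math.log10(mean_square)

def compress_instant(samples, threshold_db=-12.0, ratio=3.0):
    """Compressor with no attack/release: gain follows each sample directly."""
    out = []
    for x in samples:
        level_db = 20.0 * math.log10(max(abs(x), 1e-9))
        over_db = max(level_db - threshold_db, 0.0)
        gain_db = over_db / ratio - over_db
        out.append(x * 10.0 ** (gain_db / 20.0))
    return out

def normalize_peak(samples):
    """Scale the samples so the loudest one is exactly at full scale (0 dBFS)."""
    peak = max(abs(x) for x in samples)
    return [x / peak for x in samples]

# Toy recording: a "whisper" at amplitude 0.1, then a "scream" at amplitude 1.0.
whisper = [0.1 * math.sin(2 * math.pi * 5 * n / 1000) for n in range(1000)]
scream = [1.0 * math.sin(2 * math.pi * 5 * n / 1000) for n in range(1000)]
recording = whisper + scream

processed = normalize_peak(compress_instant(recording))

before_db = rms_db(recording)
after_db = rms_db(processed)
print("RMS before:", round(before_db, 2), "dBFS")
print("RMS after: ", round(after_db, 2), "dBFS")
```

Both versions peak at 0 dBFS, but the processed one has a higher RMS level, because the whisper has been lifted along with everything else once the scream was pulled down.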